We hear it all the time: to build impactful products, follow the build-measure-learn feedback loop. But while that sounds clear in theory, it can feel very fuzzy in practice. Tracker would like to share a case study of how we applied this process while iterating on our recent Reviews feature.

To learn more about why we decided to build Reviews in the first place, check out our previous blog post about the initial release.

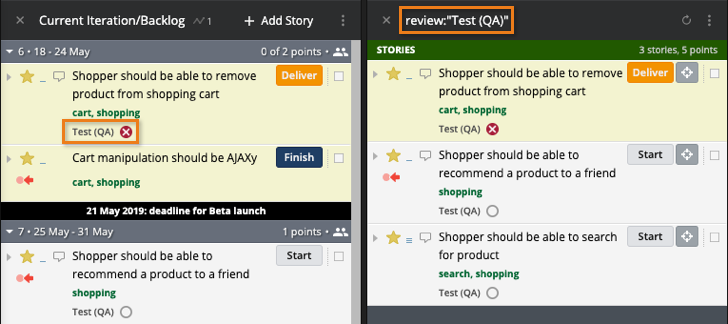

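Let me cut to the exciting stuff: based on user feedback and some data analysis, we have released the following iterations to our Reviews feature:

- Reviewers can add a comment as part of setting a review’s status.
- Reviews can be added to multiple stories at once from the bulk actions menu.
- Search can combine review type and review status.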

Woohoo! We can’t wait to hear how your teams are using these iterations. Please keep sharing feedback so we can keep learning!

Now, for the story of how we got here.

We released Reviews to all users at the end of January (after lots of building, measuring, and learning around an MVP version released in the fall).

One of the most important things to remember about the build-measure-learn loop is that you have to think about all three steps at the same time, even though you execute them mostly in sequence. When we decided to build Reviews, we simultaneously decided what we would measure once it was released, so we could learn whether it was solving the problems we designed it to solve.

Once Reviews was released into the world, we started measuring how the feature performed against the metrics we had outlined. Our metrics were both qualitative and quantitative; a few examples of our quantitative measurements include:

We also listened for qualitative user feedback. In fact, we were so eager to learn what users thought that we embedded a “Provide Feedback” link directly into the feature for the first few months it was out.

Our support team was instrumental in the learning part of build-measure-learn. They gathered, categorized, and organized hundreds of pieces of feedback from users. With that, they were able to help us visualize feedback clustering around certain common themes. Based on this clustering, we were able to prioritize our biggest iteration opportunities.
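For readers who want to try something similar, here is a minimal sketch of the tallying step, assuming each piece of feedback has already been tagged with a theme by a person. The theme labels and counts below are made up for illustration; they are not our real data, and this is not the tooling our support team used.

```python
from collections import Counter

# Hypothetical feedback items. In practice a person reads each piece of
# feedback and assigns the theme; the labels and volumes here are invented.
feedback = [
    {"id": 1, "theme": "comment while reviewing"},
    {"id": 2, "theme": "auto-add reviews"},
    {"id": 3, "theme": "comment while reviewing"},
    {"id": 4, "theme": "search by type and status"},
    {"id": 5, "theme": "comment while reviewing"},
    {"id": 6, "theme": "auto-add reviews"},
]


def rank_themes(items):
    """Count feedback items per theme, most frequent theme first."""
    counts = Counter(item["theme"] for item in items)
    return counts.most_common()


ranked = rank_themes(feedback)
for theme, count in ranked:
    print(f"{count:>3}  {theme}")
```

A count like this only shows where feedback clusters. Deciding what to build still takes a conversation with the users behind the numbers.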

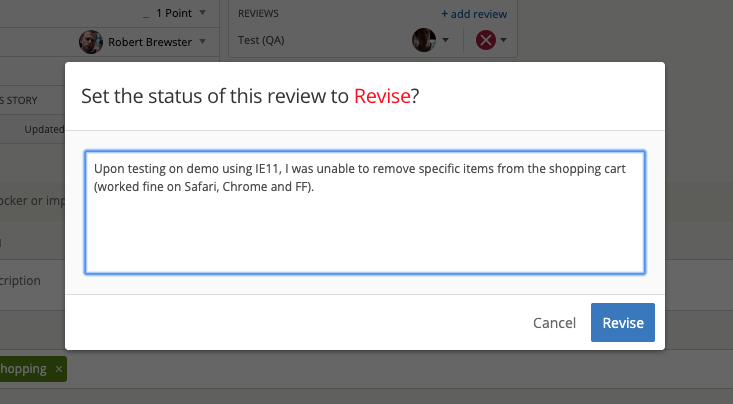

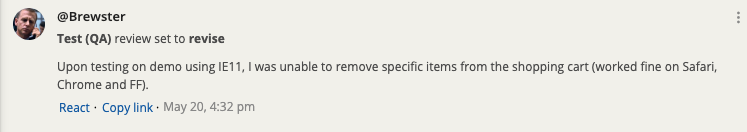

First, reviewers wanted to be able to explain why they had set a review to pass or revise as part of their flow. Before this iteration, a reviewer would have to set the review status, then make a separate comment explaining their thinking. Now, reviewers can seamlessly add a comment as part of the reviewing process. This addition helps close the loop between a reviewer and whoever needs to make the additional changes to get a story accepted.

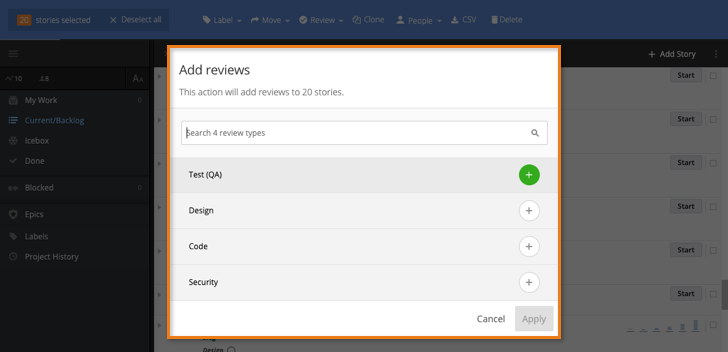

Second, users requested that a set of reviews be auto-added to all new stories. This would have been a substantial engineering effort, so we contacted some of these users to understand the problem this would solve for them. Interestingly, users rarely needed the exact same set of reviews added to every single story; instead, they usually needed some combination of reviews added to most of their stories. We learned that if we had implemented exactly what users asked for, we would have actually created a new problem: users needing to remove reviews from the stories where they weren’t necessary. Instead, we decided to include reviews in the bulk actions menu so that users can just add the reviews they need to the stories that need them, with minimal effort.

We consider this a strong example of how to use the build-measure-learn loop: “learn” in build-measure-learn does not mean “learn exactly what your users are asking for so you can build it”; it means “learn why your users are asking for something, then build something that solves their problem.”

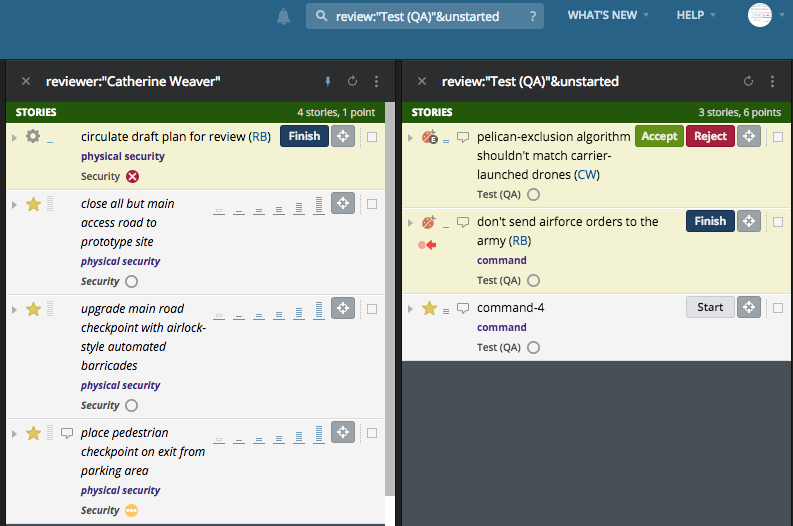

Third, users wanted to search for reviews of a certain type in a certain status (in our initial release, search worked independently for type and status). This change solves the problem of a designer needing to see all stories with unstarted design reviews, for example, or a product manager needing to see all stories with failed exploratory testing reviews.
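To make the difference concrete, here is a small sketch of independent versus combined matching. The `Story` and `Review` classes, the function names, and the status strings are hypothetical and exist only for this example; this is not Tracker’s search syntax or implementation.

```python
from dataclasses import dataclass, field


@dataclass
class Review:
    review_type: str  # e.g. "design", "test" (illustrative values)
    status: str       # e.g. "unstarted", "pass", "revise" (illustrative values)


@dataclass
class Story:
    name: str
    reviews: list = field(default_factory=list)


def match_independently(stories, review_type, status):
    """Type and status may be satisfied by two different reviews on a story."""
    return [
        s for s in stories
        if any(r.review_type == review_type for r in s.reviews)
        and any(r.status == status for r in s.reviews)
    ]


def match_combined(stories, review_type, status):
    """A single review must have both the given type and the given status."""
    return [
        s for s in stories
        if any(r.review_type == review_type and r.status == status
               for r in s.reviews)
    ]


stories = [
    Story("Checkout page", [Review("design", "unstarted")]),
    Story("Login form", [Review("design", "pass"), Review("test", "unstarted")]),
]

independent = [s.name for s in match_independently(stories, "design", "unstarted")]
combined = [s.name for s in match_combined(stories, "design", "unstarted")]
```

In this sketch the independent match also returns "Login form", even though its design review has already passed, because a different review on that story is unstarted. The combined match returns only "Checkout page", which is what a designer looking for unstarted design reviews actually wants.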

Once these areas had been identified and prioritized, we designed new mocks and usability tested them with users who had requested the changes. Once the designs were validated, we were ready to jump right back into the Build phase.

Now that these iterations are built, we are excited to measure their impact and learn what further iterations we can provide in the future.

To learn more about Reviews, please visit Reviews: Representing the entire team. You can let us know what you think about these changes, or any others you’d like to see, by using the Provide Feedback widget under Help in Tracker, via Twitter, or by emailing us.
