The Tracker team as a whole takes responsibility for building in quality and for making sure that necessary testing activities are done along with other development tasks. But we testers bring our own special value to the party, and we’re seeking another great tester to help us deliver the best possible product to our customers. Let me walk you through a typical day of testing on the Tracker team.

Breakfast!

Every day begins with a catered breakfast, followed by standups. Our Tracker team is getting pretty large, around 30 people as I write. To work in optimally sized teams, we’re divided into “pods” of up to four developer pairs each, plus designers, a tester, the product owner, and help from Customer Support and Marketing. A quick all-hands standup is followed by pod standups to balance communication and collaboration within and between pods.

In the five-minute all-hands standup this morning, one of the other pods announced that they planned a release today. Sara, one of our designers, reminded us about the design critique scheduled for tomorrow.

Next, in another short standup for the pod I’m on, I mentioned a failing build that was blocking testing. A developer pair volunteered to look into the failure. I didn’t need any other testing help today, but it would have been available if I had. Our Tracker team currently has only two testers, but the POs, developers, Customer Support specialists, and designers all help with various testing activities.

Later, in our quick Test/Support team standup, I offered to help with the regression testing for the other pod’s release. There weren’t too many support tickets coming in, so Nate, our Support Lead, also volunteered to help.

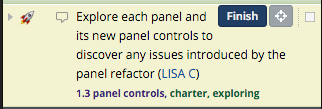

After standups, I realized that the release branch hadn’t been deployed for final regression testing yet, so I checked my pod’s Tracker projects to see what was ready for testing. Each of our features is usually represented by an epic in Tracker, made up of multiple small stories. I write exploratory testing charters for each feature. Today, enough stories were done for our new shared search feature that I could start exploring. I clicked the Start button on the first charter to begin.

An exploratory testing charter in Pivotal Tracker

I explored the user experience of the new feature and compared it for consistency with other parts of the UI. I asked Sara, the designer, to pair with me to verify that it looked correct in different browsers. She decided to tweak some images and went to talk to a developer about it. I worked through another charter, exploring with different roles and personas. I thought we had missed a use case, and after discussing it with Matt, our product owner, I wrote a new story for it. I made notes in the charter story about what I learned while exploring.

I needed a break, so I joined some teammates in a doubles ping pong match. After that, it was time to start on regression testing for the other pod’s release. We put regression test checklists from a template into a Tracker chore to make it easy for a few of us to share the work. Our CI has extensive automated tests from the unit level up to the UI level, so our confidence level is high, but we like to make doubly sure. Depending on which part of our app we’re releasing, we do additional automated checks as well as some manual checks of our UI, integrations with third-party products, and other testing as needed. Today’s release was for our Platform. I ran a Postman collection to double-check that the API’s functionality and performance looked correct. I then checked that the release didn’t cause issues with our iOS push notifications or Google integration. Nate and Jo, our Test and Support Manager, completed the rest of the checklist, and the release was ready to go.

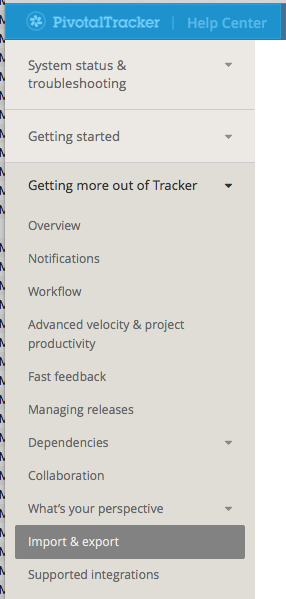

New Help menu

After lunch, I worked on some articles for our new Knowledge Base. We’re writing it from scratch to replace our existing Help pages with searchable, easily navigated information. I made some changes suggested by our Content Manager, Steve, and added some illustrations from our Senior Designer, Monique.

While working on the articles, I helped one of our support specialists reproduce a problem that a customer reported with one of our API endpoints. Later, a pair of developers asked me to review some Behavior-Driven Development (BDD) scenarios with them to see if I could think of any additional cases to cover.

Next, we had our pre-Iteration Planning Meeting (pre-IPM). I got together with our pod’s PO, designer, and development anchor to discuss stories that the whole pod will estimate in a couple of days when we hold our weekly IPM. We use a “Three Amigos” approach (using the term coined by George Dinwiddie), only we have four amigos! We example map each story, specifying rules and examples of desired and undesired behavior, and note any questions that come up.

Amigos collaborating

I learned about example mapping from Matt Wynne at a recent conference. We started trying it as an experiment several months ago. The resulting rules and examples help ensure that we all share a basic understanding of each story as we start discussing it in the IPM. The developer pair who later works on the story uses the rules and examples to come up with scenarios for the behavior-driven acceptance tests that guide their coding, alongside the Test-Driven Development (TDD) they do at the unit level. They use Cucumber and Capybara to write the BDD tests. When all the TDD and BDD tests are green, the story should meet all of its acceptance criteria. The developer pair also does some manual exploratory testing on each story. Together, example mapping, BDD, and exploring have contributed to a significant decrease in our story rejection rate and cycle time.
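To make the path from an example map to acceptance-test scenarios a little more concrete, here is a minimal Python sketch under our own assumptions. It models one story with its rules, the examples that illustrate each rule, and the open questions, then drafts a Cucumber-style Given/When/Then scenario for each example. The class names, the `to_gherkin` helper, and the shared-search rule below are hypothetical illustrations, not Tracker’s actual stories or tooling; in practice the map lives on index cards and the developer pair writes the real scenarios in Cucumber with Capybara.

```python
from dataclasses import dataclass, field


@dataclass
class Example:
    """A concrete example (green card): a situation and its expected outcome."""
    name: str
    given: str
    when: str
    then: str


@dataclass
class Rule:
    """A business rule (blue card), illustrated by one or more examples."""
    text: str
    examples: list[Example] = field(default_factory=list)


@dataclass
class ExampleMap:
    """The example map for one story (yellow card), plus open questions (red cards)."""
    story: str
    rules: list[Rule] = field(default_factory=list)
    questions: list[str] = field(default_factory=list)

    def to_gherkin(self) -> str:
        """Draft one Gherkin-style scenario per example, grouped under a comment naming its rule.

        Open questions are emitted as comments, not scenarios, because they are
        still unanswered and should not become tests yet.
        """
        lines = [f"Feature: {self.story}"]
        for rule in self.rules:
            lines.append("")
            lines.append(f"  # Rule: {rule.text}")
            for ex in rule.examples:
                lines.append(f"  Scenario: {ex.name}")
                lines.append(f"    Given {ex.given}")
                lines.append(f"    When {ex.when}")
                lines.append(f"    Then {ex.then}")
        for question in self.questions:
            lines.append(f"  # Open question: {question}")
        return "\n".join(lines)


# Hypothetical example map for a shared search story (illustration only).
search_map = ExampleMap(
    story="Shared search",
    rules=[
        Rule(
            "Only project members can see a shared search",
            [
                Example(
                    "Member sees the shared search",
                    "a search is shared in project A",
                    "a member of project A opens the search panel",
                    "the shared search is listed",
                ),
                Example(
                    "Non-member does not see the shared search",
                    "a search is shared in project A",
                    "a user outside project A opens the search panel",
                    "the shared search is not listed",
                ),
            ],
        )
    ],
    questions=["Can viewers edit a shared search?"],
)

if __name__ == "__main__":
    print(search_map.to_gherkin())
```

Keeping questions apart from rules mirrors the session itself: a question is something the amigos couldn’t answer yet, so it gets flagged for follow-up instead of being turned into a scenario.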

Our Test/Support team retrospective is scheduled for tomorrow. I reviewed the action items assigned to me at the last retro, along with the measurements we agreed on, to see how much progress we had made. One goal from that retro was to improve visibility into when and where the code for any given story is deployed, so we know where we can test it. I checked the recently finished stories against the test environments where they were deployed and saw that there were still a few “gray areas.” The PO and I discussed this with one of the developers on our Toolsmiths team to see what could be improved in the automatic deploy process, and we wrote a story for that improvement.

To be fair, not every day goes this smoothly or is this productive. Stuff happens! We might get sidetracked by a production issue, or I might spend an entire day on one activity, such as working on the Knowledge Base. There are always new experiments to try. For example, we’d like testers and developers to pair more often, but that’s a bit difficult right now with only two testers. We’re planning to automate more of our manual acceptance and regression testing to reduce the time needed for manual regression and free up more time for exploring. We’d also like to do more to transfer testing skills to developers. I recently facilitated an exploratory testing workshop at our weekly Tech Talks office lunch, but we could do much more to get POs, designers, and developers writing and exploring their own test charters.

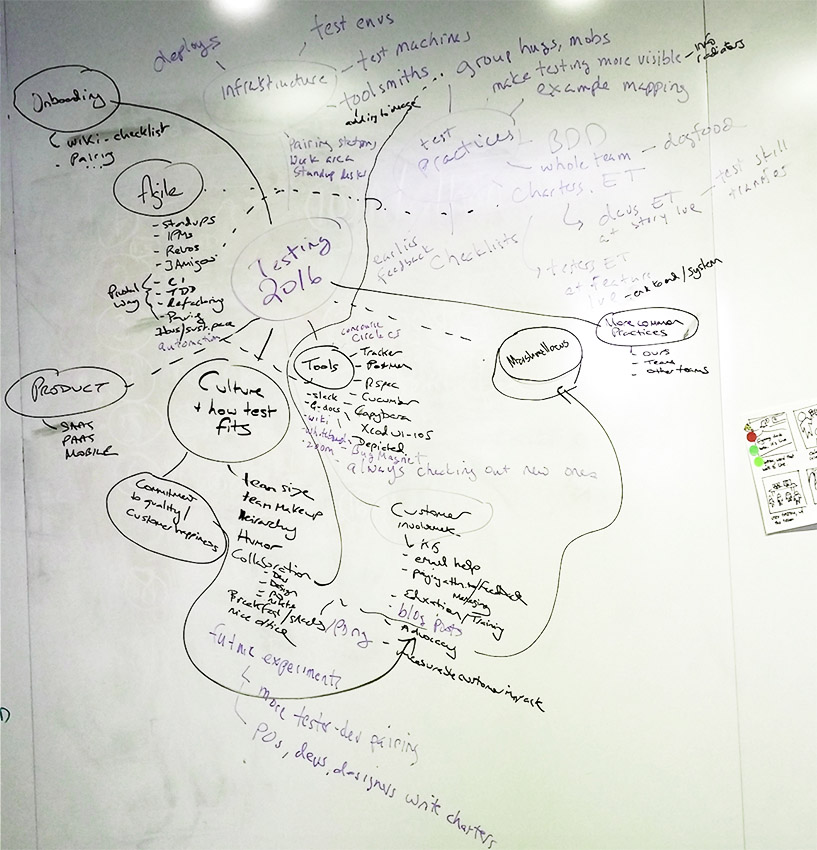

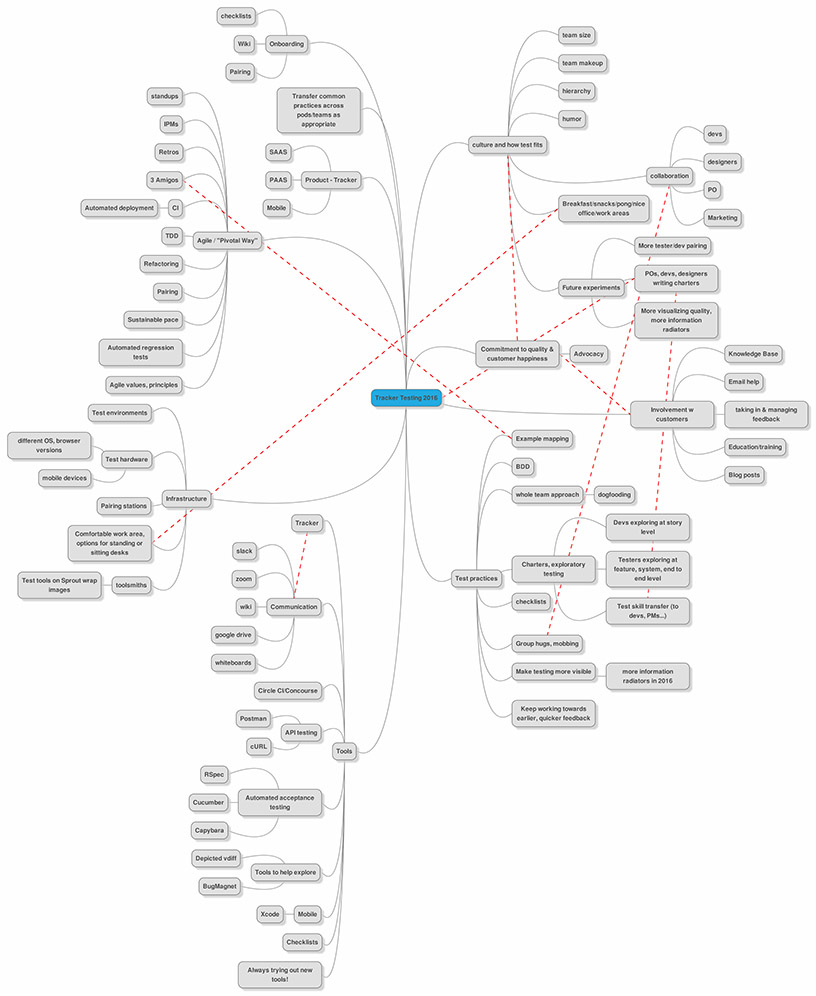

Our mind map for testing in 2016 reflects our team culture around testing, along with the practices, tools, and infrastructure that support it. The heart of testing in Tracker is the commitment that our team, and indeed our company, has to quality and customer happiness. A key part is our direct involvement with our customers through our email support and user feedback process.

We brainstormed where we are and where we’d like to go this year.

We captured our mind map in an online collaboration tool for further discussions.

Does the idea of this workday sound exciting to you? Great! We hope to find another adventurous tester to join us on our journey to learn more and keep improving the quality of Tracker. As you can see from the mind map, we have endless areas to explore!
